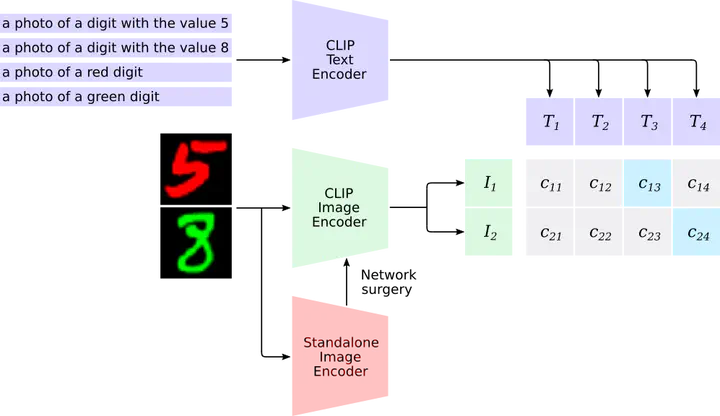

Framework for Caption-Driven XAI: Integrating target CNNs with CLIP via network surgery to identify semantic bias.

Abstract

Robustness has become one of the most critical problems in machine learning (ML). The science of interpreting ML models to understand their behavior and improve their robustness is referred to as explainable artificial intelligence (XAI). One of the state-of-the-art XAI methods for computer vision problems is to generate saliency maps. A saliency map highlights the region of an image's pixel space that excites the ML model the most. However, this property can be misleading when spurious and salient features occupy overlapping pixel regions, because the map cannot show which of the two the model relies on. In this paper, we propose a caption-based XAI method, which integrates a standalone model to be explained into the contrastive language-image pre-training (CLIP) model using a novel network surgery approach. The resulting caption-based XAI model identifies the dominant concept that contributes the most to the model's prediction. This explanation reduces the risk of the standalone model falling for a covariate shift and contributes significantly towards developing robust ML models.
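To make the idea of a "dominant concept" concrete, the following is a minimal sketch of caption scoring in a CLIP-style shared embedding space. It is not the authors' implementation and does not show the network surgery step. The function names, the toy embeddings, the two captions, and the temperature value are all hypothetical; the sketch only assumes that image and caption embeddings are compared by cosine similarity and that the highest-scoring caption is read as the dominant concept.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def softmax(scores, temperature=1.0):
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp((s - top) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def rank_captions(image_embedding, caption_embeddings, temperature=0.01):
    """Rank candidate captions by similarity to one image embedding.

    Returns (caption, probability) pairs, most likely first.
    The temperature is an assumed value chosen for illustration.
    """
    captions = list(caption_embeddings)
    sims = [cosine(image_embedding, caption_embeddings[c]) for c in captions]
    probs = softmax(sims, temperature)
    return sorted(zip(captions, probs), key=lambda pair: pair[1], reverse=True)


# Hand-made toy vectors standing in for encoder outputs (assumption).
image_embedding = [0.9, 0.1, 0.2]
caption_embeddings = {
    "a photo of a red object": [1.0, 0.0, 0.1],
    "a photo of a round object": [0.1, 1.0, 0.0],
}

ranking = rank_captions(image_embedding, caption_embeddings)
dominant_caption = ranking[0][0]
print(dominant_caption)
```

In this toy setup, a caption about color outranking a caption about shape would suggest that the image embedding is driven more by color than by shape, which is the kind of signal a caption-based explanation is meant to surface.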

Patrick Koller, Amil V. Dravid, Prof. Dr. Guido Schuster, and Prof. Dr. Aggelos Katsaggelos

Accepted and presented at the IEEE ICIP 2025 Satellite Workshop: “Generative AI for World Simulations and Communications & Celebrating 40 Years of Excellence in Education: Honoring Prof. Aggelos Katsaggelos,” Anchorage, Alaska, USA, Sept 14, 2025.

BibTeX Citation

@INPROCEEDINGS{Koller_2025_CaptionXAI,
  author={Koller, Patrick and Dravid, Amil V. and Schuster, Guido M. and Katsaggelos, Aggelos K.},
  booktitle={2025 IEEE International Conference on Image Processing Workshops (ICIPW)},
  title={Caption-Driven Explainability: Probing CNNS for Bias Via Clip},
  year={2025},
  volume={},
  number={},
  pages={663-667},
  keywords={Explainable AI;Computational modeling;Machine vision;Zero shot learning;Surgery;Medical services;Debugging;Predictive models;Robustness;Convolutional neural networks;Multi-Modal Explainability;CLIP;Model Bias Detection;Zero-Shot Learning;Network Surgery},
  doi={10.1109/ICIPW68931.2025.11386015}
}